A recent poll showed that more and more Indians believe a totalitarian government can grow the economy faster than a democratic one.

Ref: http://www.pewglobal.org/2017/10/16/democracy-widely-supported-little-backing-for-rule-by-strong-leader-or-military/

https://scroll.in/article/854479/why-does-india-the-worlds-largest-democracy-love-the-idea-of-dictatorship

I believe this fondness for totalitarian government comes from comparisons with China, which in the recent past was able to improve its economy quickly despite facing population and illiteracy challenges similar to India’s.

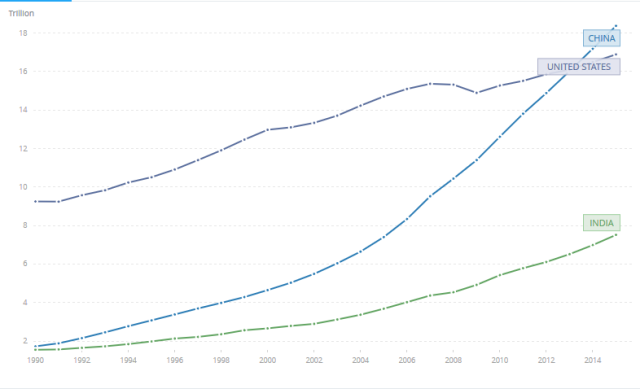

Using GDP per capita as a measure of economic progress, and using data only up to 2000 to highlight the major divergence, we can see the difference.

https://data.worldbank.org/share/widget?end=2000&indicators=NY.GDP.PCAP.CD&locations=CN-IN&start=1960&view=chart

Since the BJP won its historic mandate in the 2014 Lok Sabha elections, it has managed to consolidate power in many states, and we seem to be moving closer to a single party ruling most of the country. People can’t stop comparing the two countries and hoping that the BJP will achieve the same growth China has achieved. Curtailing small freedoms as the price of growth under a strong totalitarian government is becoming more and more acceptable to people. However, there are more reasons to fear a strong central government than to celebrate it.

But if you read the graph above, you can clearly see that China’s explosive economic growth didn’t start until 1978, with the exception of 1985-87 (also discussed briefly below).

What changed? China had a new leader, Deng Xiaoping, who had slowly risen through the ranks of the CPC (Communist Party of China). Sadly, most people around the world have never heard of him.

Why? The story is not spicy enough.

This is why I fear that Narendra Modi is trying to achieve a mix of work and spice.

Everybody knows about Mao and his basic philosophy, and many end up thinking that today’s China is the result of the Communist Party that Mao established.

However, economists and students of politics around the world know Deng Xiaoping for the reforms that turned around China’s fate.

It is easy for me to appreciate Deng Xiaoping while sitting in Suzhou Industrial Park, one of the industrial parks China established in collaboration with Singapore and an example of the reforms of the last 25 years of China’s history.

I would first like to lay out the distinctions between the two Chinese leaders, and China under each of them, and let you decide for yourself how this relates to India.

Chairman Mao

One of the founding members of the Communist Party of China (CPC), and chairman of the party (1943-76).

He introduced five-year plans to implement Soviet-style central planning.

He started the Great Leap Forward, with aggressive commune-driven projects and the top-down application of a large number of untested and unscientific techniques, which caused agricultural production to fall and led to mass famines in which millions starved to death. However, his image remained untarnished, because people argued that, due to the top-down policy structure, the evidence of bad implementation on the ground never reached him and he was not informed of the extent of the famines. Many lower-ranking officials over-reported production figures, which led central planners to allocate too little food to those regions and caused mass starvation.

To save face, anyone who reported or criticized the policies’ impact was labeled a rightist and sent to labor camps in rural areas.

He started the Cultural Revolution and set out to remove all forms of past inequality by aggressively purging the intelligentsia, forcing them to work in the fields and learn from the peasants. In a way, he denounced the old system as a failure in order to start a new system, a new China, from the ground up.

That said, there were a lot of bright sides to his rule:

Under him, the economic inequalities of the past were largely dissolved; everyone had to start from the ground up, as the government owned all property.

The position of women in China was raised to equality with men in all respects.

People were forced to shed past orthodox approaches.

He put a lot of effort into creating and maintaining his public image: his sayings were published as Quotations from Chairman Mao, the Little Red Book, and everyone was encouraged to carry a copy.

His ideology was published widely and taught in schools.

His body is preserved in the Mao Mausoleum in Tiananmen Square, Beijing. When I visited, it felt similar to visiting a temple in India; outside the mausoleum are shops selling statues, photos and necklaces of Mao, along with prints of his writings. He is portrayed as a larger-than-life leader in China’s history.

Deng Xiaoping

He was the paramount leader of the CPC from 1978 to 1992.

He is considered one of the central pillars of China’s economic reform, which led to many years of double-digit economic growth, and he is credited with China’s economic liberalization.

https://data.worldbank.org/share/widget?end=2016&indicators=NY.GDP.PCAP.KD.ZG&locations=CN-IN&start=1962

Soon after he came to power, one of his first steps was to allow the so-called Beijing Spring, which permitted open criticism of the excesses and suffering of the previous period.

Deng himself remarked that Mao was “seven parts good, three parts bad,” acknowledging his legacy and importance in China’s history while also recognizing his shortcomings.

He moved China from the centrally planned commune model (i.e. community-based industries) to a market-oriented model, opened China up to global standards, and gave local entrepreneurs greater flexibility.

He launched the ideology of Socialism with Chinese Characteristics. He famously said “it doesn’t matter whether a cat is black or white, if it catches mice it is a good cat”.

He favored an experiment-driven approach: many of the reforms of his time did not originate with him, but he supported them by expanding successful experiments to larger areas.

One famous example is the Household Responsibility System. Instead of agricultural production being handled entirely by the commune, the village’s land was divided among 18 households, each assigned a minimum quota to meet, and any surplus could be sold on the market. The arrangement was first developed in secret in one village, but it led to large gains in farm productivity. When Deng Xiaoping found out about it, he openly praised it, and it was later expanded nationwide.

In 1987-89, the government planned a major price reform, deregulating price controls in major areas of the economy and moving them to market-driven prices. The fast pace of the reform triggered panic buying: people rushed to buy in advance the products whose prices were expected to rise. This caused high inflation, and the government was forced to ration a few items. The major impact was felt from March 1988. By October 1988 the government had acknowledged the failure of the reform and reimposed price controls on major commodities (30% of agricultural output was subject to price controls).

Ref: http://documents.worldbank.org/curated/en/582991468015853321/Price-reform-in-China

Ref: https://www.amazon.com/Chinas-Megatrends-Pillars-New-Society/dp/0061859443

He voluntarily resigned from top party positions in 1992 and established a new norm of two-term presidents.

He continued to use his public image, touring southern China to encourage entrepreneurship.

There were also many controversial decisions taken during Deng’s leadership, including:

The One Child Policy, under which heavy fines were levied on families with more than one child, with exceptions for ethnic minorities and, in some regions, for families whose first child was a girl.

The Strike Hard anti-crime campaign, under which quotas were set for the execution of criminals (say, 50,000 per year).

And the crackdown on the 1989 Tiananmen Square protests.

So, is the China model good for India? It depends on the leader.

Although the Modi government’s policies are mostly reform-oriented, the branding and structure around them are not.

Here is why I think Narendra Modi is trying to be more like Mao (the downsides of the China model) than Deng Xiaoping (the reason for its economic progress):

1- Branding and Image: Like Chairman Mao, the BJP has focused largely on branding a single person as the source of all that is good, from stories planted in the news and anchors praising Modi to the publication of Bal Narendra, the Mann Ki Baat broadcasts, and making his speeches compulsory for school students, turning Modi into a larger-than-life figure to be celebrated.

This approach is dangerous because any criticism, however well-intentioned, makes Modi’s image human, which does not fit the grand picture and therefore has to be avoided at all costs.

2- Us vs Them mentality: To protect the Modi brand, the media labels any criticism as anti-national, alienating the critic in the reader’s mind. This closely resembles the Cultural Revolution, when any anti-Mao sentiment was branded capitalist.

3- Revolution vs Reform: Statements like “there has been no development in the last 60 years” sound more like a first-time revolutionary regime than the continuation of a reformist one.

However false, this creates the narrative of a savior rather than just another soldier in an ongoing fight.

This is dangerous because a revolution allows old structures to be broken with impunity: since this is the new India, anything the government changes is okay.

Reform is a more careful process that requires demonstrable progress, and hence scrutiny.

4- Top-down decisions rather than bottom-up experimentation with results: This turns orders from the top into unquestionable commandments, and setting blanket quotas to achieve creates a bad framework for reform.

E.g. setting quotas increased toilet use, but officials enforced them with questionable methods, ranging from photographing and shaming people defecating in the open to denying rations to those who did not follow the directive.

The worst examples are, of course, Demonetization and GST.

5- Not accepting mistakes: This is one of the key issues for the BJP and one of the biggest sources of opposition rhetoric, with actions like Demonetization and rolling out GST within a month of initial trials of the software. The reform policies have many good implications, but the failure to acknowledge problems and mistakes and to roll back orders is problematic.

Corrections kept being made on the fly, which caused huge confusion and losses to the economy; many small and medium-scale industries closed, causing job losses and, of course, loss of life.

“Acknowledging a mistake is a commitment to not repeat it.”

If a reform-oriented government fails at a reform, which is bound to happen with experimentation, accepting the failure gives people hope that the government holds itself accountable and won’t repeat the mistake, as Deng Xiaoping did.

Refusing to accept the mistake and keeping up the rhetoric makes people fear that the government will carry out more such experiments with impunity.

The BJP won its mandate for many reasons, but I think the primary one was the promise of economic progress through the Gujarat Model.

The Demonetization exercise, and the failure to admit it was a mistake, seems to have eroded that confidence in the government. Now 2019 no longer looks like the comfortable win for the BJP it was once expected to be. The new Budget shows the government’s nervousness about further reforms.

One of the main benefits of single-party rule in China is a long-term strategy that doesn’t change with changes in leadership.

The Modi government could have achieved that stability with the mandate it received, had it followed Deng’s model of development.

It seems like a lost opportunity for growth.
